Bridging the many miles across immersive experiences

Immersive content, including virtual reality (VR), augmented reality (AR) and 360-degree video / mixed reality, is still in a relatively early stage in terms of mass adoption. Yet, the prospects for growth are very encouraging as audiences continue to embrace the power of immersive experiences, especially in today’s world of virtual education, virtual meetings, essentially – virtual everything! However, how this content will ultimately flow from source to endpoint (consumer) is still unclear and quite challenging, especially within a fragmented ecosystem of content suppliers and intermediaries, all with differing agendas and technology stacks.

At IBC 2023, one of the most relevant presentations on this subject offered a glimpse into how cooperation and standards-based technologies, alongside detailed test and measurement, can deliver the knowledge needed to make this next generation of premium content a better consumer experience at scale.

Adding more depth

Immersive content is not yet governed by an industry-standard definition, but many agree it describes content with more than one dimension, in contrast to linear video, which is essentially flat. Immersive also suggests a degree of interactivity from the user. Within this broad definition sits a host of content, from virtual and augmented reality through gaming to branching content like the innovative Bandersnatch, a Netflix-exclusive movie that allows the viewer to direct the narrative. By its very nature, immersive content is broad in scope. And unlike traditional linear video, it is still a relatively open field regarding market definers, experience attributes, standards, and best practices.

Another characteristic of immersive content is that it tends to be bandwidth-heavy and processor-intensive on the end device. By way of comparison, a standard HD 1080p video streamed via a service like Netflix consumes about 5 Mbps. Streaming a 360-degree video requires a constant throughput of over 25 Mbps to support UHD 4K and over 50 Mbps for UHD 8K. Immersive experiences also tend to require lower latency, especially for cloud gaming. For example, Nvidia's GeForce NOW cloud gaming service recommends 25 Mbps, as well as a latency of 40 ms or less from the end user to its service, for smooth 1080p, 60 fps gaming.
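To put those bitrates in perspective, the short sketch below converts them into data volume per hour of viewing and checks which formats a given connection could sustain. It is only an illustration: the function names are made up for this example, the bitrates are the floor values quoted above (the text says "over" 25 and 50 Mbps, so real requirements will be somewhat higher), and decimal units (1 GB = 1000 MB) are assumed.

```python
# Minimum bitrates quoted in the article, in megabits per second.
# The 360-degree figures are floors ("over 25" / "over 50"), not exact values.
STREAM_BITRATES_MBPS = {
    "HD 1080p": 5,
    "360-degree UHD 4K": 25,
    "360-degree UHD 8K": 50,
}


def gigabytes_per_hour(bitrate_mbps):
    """Convert a sustained bitrate in Mbps to data volume in GB per hour."""
    megabits = bitrate_mbps * 3600   # seconds in one hour
    return megabits / 8 / 1000       # bits -> bytes, then MB -> GB (decimal)


def supported_formats(link_mbps):
    """Return the formats whose quoted floor bitrate fits within the link rate."""
    return [name for name, rate in STREAM_BITRATES_MBPS.items() if rate <= link_mbps]


if __name__ == "__main__":
    for name, rate in STREAM_BITRATES_MBPS.items():
        print(f"{name}: {rate} Mbps is about {gigabytes_per_hour(rate)} GB per hour")
    print("A 30 Mbps link could sustain:", supported_formats(30))
```

Under these assumptions, an hour of 360-degree 8K video moves roughly ten times the data of an hour of HD 1080p, which is why delivery capacity close to the viewer matters so much for this kind of content.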

Although M&E is a key area of interest for immersive content, it also has many other applications, from remote healthcare to manufacturing and beyond.

IBC Show 2023 highlight

For anybody interested in this area, a recent IBC paper, “Optimizing the Virtual Reality Experience Using a 360 View of Client and Network Data”, provided a wealth of insight into what we collectively need to do to ensure that networks are prepared and optimized for this new era of content.

The paper was presented by Sanjay Mishra, Associate Fellow, Verizon; Ravid Hadar, VP Products, Compira Labs; ErinRose Widner, Global Head of Business Strategy, Emerging and Creative Technologies, M&E Verizon; and Brian Stevenson, Founder, Openvu.TV. The group evaluated and leveraged tools and workflows to provide the most efficient and best immersive content experience possible.

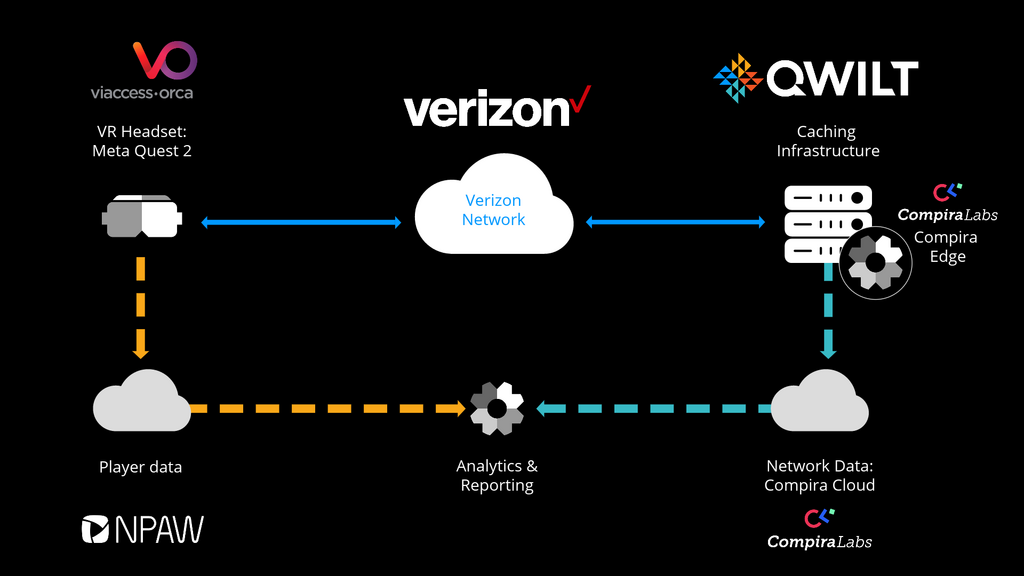

The Proof of Concept (PoC) was hosted within Verizon’s lab and ran on deep caching using Qwilt’s open caching software. VR content was played on a Meta Quest 2 headset using a VR player provided by Viaccess Orca, with a range of network and client-side analytics tools provided by Compira Labs and NPAW.

One of the goals of the PoC was to increase visibility into the last-mile network and maximize VR quality of experience (QoE) by selecting the optimal congestion control configuration. Through its testing, the group hopes to ensure that actionable data is translated into real-time network optimization.

PoC deployment model

The PoC tested multiple VR stream formats and encoding methods across varying congestion control methods. The full paper is worth reading for the technical detail, but in essence it showed that VR content is extremely sensitive to delivery bandwidth and latency. In addition, the type of congestion control mechanism used had varying impacts on VR QoE. What was clear was that visibility into network performance analytics, combined with a view into the end-user QoE, can help achieve an optimal immersive video experience. Finally, customizing congestion control at the last mile, based on network conditions, helped improve QoE.
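The idea of tailoring congestion control to last-mile conditions can be sketched in a few lines. This is a hypothetical illustration, not the PoC's implementation: the article does not say which algorithms or thresholds were tested, so the choice between "cubic" and "bbr", the RTT and loss cut-offs, and every function name here are assumptions. The only real API used is the Linux-only `TCP_CONGESTION` socket option exposed by Python's `socket` module.

```python
import socket
from dataclasses import dataclass


@dataclass
class LastMileSample:
    """One measurement of the path to a client (hypothetical structure)."""
    rtt_ms: float     # round-trip time in milliseconds
    loss_pct: float   # packet loss as a percentage


def pick_congestion_control(sample):
    """Choose an algorithm name from measured conditions.

    The thresholds are illustrative assumptions. The rationale is the common
    observation that loss-based CUBIC backs off on random loss, while
    model-based BBR tends to be less sensitive to it.
    """
    if sample.loss_pct >= 1.0 or sample.rtt_ms >= 80.0:
        return "bbr"
    return "cubic"


def apply_congestion_control(sock, algorithm):
    """Try to set the algorithm on a TCP socket; return True on success.

    TCP_CONGESTION exists only on Linux, and the kernel must have the
    requested algorithm available, so failure is treated as a normal case.
    """
    if not hasattr(socket, "TCP_CONGESTION"):
        return False
    try:
        sock.setsockopt(socket.IPPROTO_TCP, socket.TCP_CONGESTION, algorithm.encode())
        return True
    except OSError:
        return False


if __name__ == "__main__":
    sample = LastMileSample(rtt_ms=95.0, loss_pct=0.2)
    choice = pick_congestion_control(sample)
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as s:
        applied = apply_congestion_control(s, choice)
    print(f"Selected {choice}; applied to socket: {applied}")
```

In a real deployment the decision would be driven by the kind of combined network and client-side analytics the PoC describes, rather than by two fixed thresholds.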

Future cooperation

This PoC shows that successfully delivering immersive video at scale needs more cooperation between partners across the creation and distribution chain. The project, which brought together several SVTA members, highlights how important collaboration will be for the future of immersive content, and how commercialized SVTA specifications such as Open Edge Caching can streamline this evolution.

There are already discussions taking place for another joint project that will hopefully include more participants from within the ecosystem. Ultimately – for technologies like VR, AR and game streaming – building best practices, open specifications and, eventually, standards that work across content, devices and network connectivity, will unlock a whole new realm of content and enable continued industry innovation into the next decade.
